Just three years ago, asking ChatGPT to write an email felt like magic. Today, that same act feels almost quaint. In the brief span since generative AI burst into public consciousness, we’ve moved from marveling at party tricks to relying on infrastructure. AI is no longer a browser-based novelty; it’s becoming the background hum of modern life.

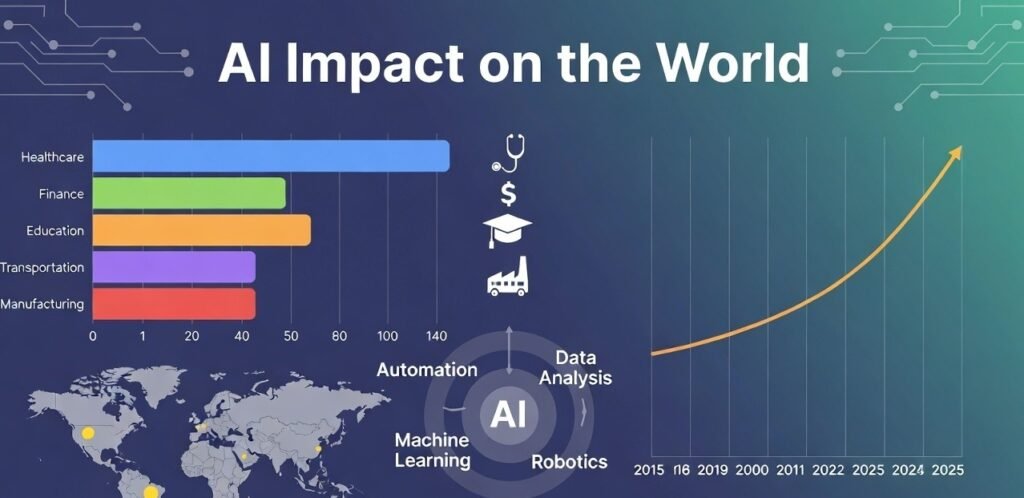

The numbers are staggering. Companies globally spent $1.8 trillion on AI last year alone. Nearly two-thirds of people across developed and developing countries expect to use AI in education, health, or work within the next year. And companies that have already adopted it report productivity gains averaging 11.5%, alongside a 4% net reduction in workforce.

But statistics only capture the surface. The real story of how artificial intelligence is changing the world is far more human. It’s the high school teacher in San Diego clearing her grading queue in days instead of weeks by outsourcing initial assessments to ChatGPT. It’s the clinician who can finally look her patient in the eye because an ambient scribe handles the documentation drudgery. It’s the blind person gaining independence through AI-generated image descriptions, and the rural farmer accessing agricultural advice through a feature phone.

It’s also the middle schoolers in New Hampshire using generative tools to strip clothes off classmates’ photos, leaving a community scrambling for policy responses. It’s the call center worker in Manila whose job was automated by a system that answers in 35 languages at 3 a.m. It’s the entry-level graduate facing a 5% drop in available positions as companies let attrition eat their junior ranks.

The transformation artificial intelligence is bringing to the world isn’t a single story. It’s millions of them, unfolding simultaneously, each carrying both promise and peril. This guide explores that transformation across the domains where it matters most: how we work, learn, heal, connect, and govern ourselves. We’ll look at what’s real versus what’s hype, who benefits versus who gets left behind, and—most importantly—what we can do about it.

Because artificial intelligence changing the world isn’t a prediction. It’s already happening. The only question is whether we shape it or are shaped by it.

Part 1: The Workplace—Productivity Gains and Painful Tradeoffs

Ask corporate leaders what AI means for their businesses, and you’ll hear two words repeatedly: efficiency and disruption. Both are accurate.

The Productivity Dividend

Companies that have been using AI for at least a year report an average productivity increase of 11.5%. That’s not incremental improvement; it’s transformative. These gains cut across industries: the consumer goods, real estate, transportation, healthcare, and automotive sectors are all seeing double-digit lifts.

What does this look like in practice? A payments company in Sweden touts its AI customer-service system as doing the work of 700 people. A fashion retailer deploys “Ask Ralph,” an AI stylist that provides outfit recommendations, understands nuanced queries, and even discerns customer sentiment to refine suggestions. In software development, teams are moving from low-code to no-code environments, with AI handling routine programming while humans focus on architecture and complex problems.

For knowledge workers, the shift means offloading drudgery. The clinician who used to spend hours on documentation can now practice medicine. The teacher buried in grading can focus on lesson planning and student interaction. The executive drowning in email can reclaim time for strategic thinking.

The Workforce Reality

But there’s another side to this story. Across the five sectors most exposed to AI adoption, companies reported eliminating 11% of jobs and leaving another 12% unfilled. New hires, at about 18%, partially offset this, resulting in a net 4% workforce reduction globally.
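The bookkeeping behind that net figure is simple enough to check by hand. Here is a minimal Python sketch, assuming all three percentages are measured against the same baseline headcount (an assumption; the figures above do not say so). The function name is hypothetical and used only for illustration.

```python
def net_workforce_change(eliminated_pct, unfilled_pct, new_hires_pct):
    """Net headcount change in percentage points of the original workforce.

    Assumes all three inputs share the same baseline, which is an
    illustrative assumption rather than something the survey states.
    """
    return new_hires_pct - (eliminated_pct + unfilled_pct)


# Rounded figures quoted in the text: 11% eliminated, 12% unfilled, 18% new hires.
change = net_workforce_change(11, 12, 18)
print(f"Net change: {change} percentage points")
```

With these rounded inputs the result is a reduction of about 5 points, slightly larger than the reported 4%. The gap presumably comes from rounding in the published components.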

The pattern is stark: job losses concentrate among entry-level positions, while experienced workers often see productivity gains. Employment for workers aged 22-25 in highly automatable occupations has fallen by roughly 5% in recent years. The work that traditionally served as an apprenticeship (routine tasks that taught junior employees the ropes) is increasingly handled by algorithms.

This isn’t necessarily net job destruction. History shows that technology can create more jobs than it eliminates, provided demand for goods and services is elastic. The U.S. today has 11 times more programmers and eight times more commercial airline pilots than it did in 1970, despite massive productivity gains in both fields. But those new jobs look different from the old ones. They require different skills, offer different wages, and distribute differently across geography and demographics.

What This Means for You

If you’re a worker, the age of AI demands a shift in mindset. The question isn’t whether AI will affect your job—it already is. The question is whether you’re positioned to work alongside it or be replaced by it.

- Focus on judgment tasks. AI can generate, summarize, and predict. It struggles with context, nuance, and accountability. The value you add lies in deciding what’s worth doing, not just doing it.

- Cultivate adaptability. The half-life of specific technical skills is shrinking. The ability to learn, unlearn, and relearn is becoming the meta-skill that matters most.

- Watch for elastic fields. Jobs in sectors with elastic demand (where productivity gains lead to more, not fewer, jobs) include programming, healthcare, education, and creative services. Agriculture, by contrast, has inelastic demand: productivity gains there have cut U.S. farm employment to roughly a quarter of its 1940 level.

Part 2: Education—The Classroom Transformed

Of all the domains AI touches, education may be the most emotionally charged. Few institutions are as foundational to our sense of social stability, and few are being disrupted as quickly.

The Scale of Change

Eighty-four percent of high school students now use AI for schoolwork. That’s not a niche trend; it’s near-universal adoption. In the UK, student AI tool use jumped from 66% to 92% in a single year. These aren’t kids cheating their way through homework (though some are). They’re learners discovering that AI tutors can explain concepts in ways textbooks can’t, at hours when teachers aren’t available.

Khan Academy’s Khanmigo reached 1.4 million students in its first year. Systems like it adapt to individual learning styles, provide instant feedback, and never tire of explaining the same concept for the fifteenth time. Research shows that one-on-one tutoring can double learning speed, and AI tutors have the potential to scale that benefit to every child, not just those who can afford private instruction.

The Double-Edged Sword

But the same tools that empower learning also enable shortcuts. The high school student who uses ChatGPT to write their essay isn’t developing writing skills. The middle schooler who discovers they can generate convincing excuses for late assignments is learning something about manipulation, not responsibility.

Beyond cheating, there are darker possibilities. Generative AI can create “deepfake” images of classmates, as happened in New Hampshire when students used tools to strip clothes off peers’ photos. Schools and communities are scrambling for policy responses to situations that didn’t exist three years ago.

There’s also concern about cognitive atrophy. If AI handles research, summarization, and even critical analysis, what happens to students’ ability to do these things themselves? Some worry that reliance on AI tools may weaken the very thinking skills education is meant to build.

The Equity Dimension

AI in education also raises profound equity questions. Students in well-funded schools with reliable internet and tech-savvy teachers will likely benefit most from AI tools. Those in under-resourced schools may fall further behind. The performance gap between students in high-poverty schools and their peers is already stark, and AI could widen it if deployment favors the already advantaged.

Conversely, AI could help close gaps if deployed thoughtfully. Students in remote areas could access world-class instruction. Those with learning disabilities could receive personalized support. English language learners could get real-time translation and scaffolding.

The outcome depends on choices made now, by educators, administrators, and policymakers.

What This Means for Parents and Students

- Embrace AI as a tool, not a crutch. Teach students to use AI for research, brainstorming, and feedback—but not to outsource their thinking.

- Advocate for thoughtful school policies. Blanket bans on AI are unenforceable and ignore the reality that students will use these tools anyway. Better to teach responsible use.

- Watch for equity gaps. If your school district isn’t talking about AI access and training, ask why.

Part 3: Healthcare—From Reactive to Predictive

Healthcare may be where AI’s potential for good is most vivid—and where the stakes of getting it wrong are highest.

Diagnostic Breakthroughs

The numbers are striking: the FDA has already approved more than 250 AI applications for medical scanning. In head-to-head comparisons, AI achieves about 50% diagnostic accuracy in some domains, compared to 40% for human doctors. Together, humans and AI achieve 60%, better than either alone.

This matters because medical errors are deadly. An estimated 200,000 North Americans die annually from misdiagnosis. Even modest improvements in accuracy translate to lives saved.

AI is also seeing what humans miss. Machine learning models can detect patterns in retinal scans that predict cardiovascular risk, information doctors couldn’t extract without algorithmic help. In radiology, AI flags suspicious nodules that human eyes might overlook after a long shift.

The Drug Discovery Revolution

Perhaps even more transformative is AI’s role in creating new treatments. DeepMind’s AlphaFold, which predicts protein structures, earned its developers a share of the 2024 Nobel Prize in Chemistry. Dozens of AI-designed drugs are now in development pipelines. While none has yet completed clinical trials, the acceleration of discovery is unprecedented.

Traditional drug development often takes more than a decade and billions of dollars. AI can simulate molecular interactions, predict side effects, and identify promising candidates in months. If even a fraction of these candidates succeed, the impact on human health will be enormous.

The Clinical Reality

Despite these breakthroughs, adoption in clinical settings remains slow. Only about 9% of healthcare organizations currently use AI, though that’s projected to reach 15% within six months. The barriers are significant: liability concerns, integration costs, workflow disruption, and the need to convince skeptical doctors that algorithms deserve trust.

There’s also the question of verification. Very few AI tools have been evaluated through randomized controlled trials, the gold standard for medical evidence. Without that validation, providers remain cautious about widespread adoption.

What This Means for Patients

- Ask questions. If your provider uses AI in diagnosis or treatment planning, ask how it works and what evidence supports it.

- Recognize AI’s limits. Algorithms can suggest, but humans must decide. The best outcomes come from collaboration, not replacement.

- Watch for equity issues. AI trained on homogeneous data may perform poorly for underrepresented groups. Ask whether the tools used in your care were validated on patients like you.

Part 4: Information and Media—Trust in Crisis

If healthcare shows AI’s promise, information shows its peril. The same technology that tutors students and diagnoses disease also generates convincing falsehoods at scale.

The Misinformation Machine

Generative AI can produce text, images, audio, and video that are increasingly indistinguishable from reality. A manipulated video can ruin a reputation long before anyone proves its origin. A fabricated news story can sway an election before fact-checkers respond.

The concern isn’t hypothetical. Polarization in online discourse is already severe; AI-generated content could accelerate echo-chamber effects, radicalize vulnerable individuals, and undermine shared reality. When anyone can create convincing fake evidence of anything, truth becomes a matter of preference rather than verification.

The Response Framework

Researchers and policymakers are developing responses. The “Bright Internet” framework proposes six pillars for ethical AI, including origin responsibility (making content traceable to accountable sources) and delivery responsibility (platforms ensuring balanced, secure dissemination).

Technical solutions include generative watermarking: imperceptible tags embedded in AI-generated content and designed to survive editing and compression. Major AI platforms are integrating these tools, though their effectiveness against determined bad actors remains uncertain.

The Human Element

Ultimately, information integrity depends on human judgment. Media literacy—the ability to evaluate sources, question claims, and seek verification—is becoming as fundamental as reading and writing. Schools, families, and communities must prioritize these skills.

What This Means for Everyone

- Verify before sharing. Assume nothing, question everything. If a story provokes strong emotion, that’s a reason to be skeptical.

- Support quality journalism. Reliable news organizations provide verification, context, and accountability that algorithms cannot.

- Teach media literacy. Whether you’re a parent, teacher, or citizen, help others learn to navigate the information environment critically.

Part 5: The Global Divide—Winners and Losers

Perhaps the most important truth about AI changing the world is that it isn’t changing it evenly. The benefits and burdens of this technology are distributed along existing lines of inequality—and may widen them.

The Capability Gap

High-income countries are far better positioned to benefit from AI. They have the infrastructure (reliable electricity, broadband networks), the human capital (skilled workers, research universities), and the institutional capacity to develop and deploy AI systems.

Low-income countries, by contrast, often lack basics. Roughly one-quarter of Asia-Pacific’s population remains offline. Fewer than one in five rural residents can perform basic spreadsheet calculations. Women in South Asia are up to 40% less likely than men to own smartphones. Into this landscape AI arrives, not as a leveler but as an amplifier of existing advantages.

The Vulnerability Gap

While benefits concentrate at the top, risks radiate downward. Workers in developing countries face automation of the very tasks that provided entry into global supply chains. Women’s jobs are nearly twice as likely as men’s to face high AI exposure.

There are also environmental vulnerabilities. Data center electricity consumption may nearly triple by 2030. Countries with fragile, fossil-fuel-based power systems risk hosting energy-hungry “data farms” that serve wealthy nations while bearing environmental costs themselves.

The Opportunity for Leapfrogging

But there’s another possibility. Just as mobile phones allowed developing countries to bypass landline infrastructure, AI could enable leapfrogging in education, healthcare, and public services.

In agriculture, AI could help small farmers optimize planting, irrigation, and harvesting, potentially transforming productivity for the 2.5 billion people who depend on smallholdings. In healthcare, AI diagnostic tools could reach remote clinics that lack specialists. In education, AI tutors could supplement under-resourced schools.

Whether AI widens divides or narrows them depends on deliberate choices by governments, international institutions, and technology companies.

What This Means for Policymakers and Citizens

- Invest in foundational infrastructure. AI benefits require electricity, connectivity, and devices. These are prerequisites, not luxuries.

- Build human capital. AI literacy, digital skills, and critical thinking are essential for participation in the AI economy.

- Pursue regional cooperation. Individual countries may lack resources to build full AI ecosystems, but regional compute and data commons can pool capabilities.

- Tailor strategies to local capacity. Lower-income countries should focus on basic connectivity and offline-capable AI; middle-income countries can scale proven pilots; higher-income countries should lead on standards and safety.

Part 6: The Next Frontier—From Tools to Collaborators

Geoffrey Hinton, the Nobel laureate often called the “godfather of AI,” offers a framing that captures where we’re headed. In a recent Berlin address, he said: “AI is no longer just giving answers; it’s starting to think, generate data, and execute tasks.”

This shift—from passive tool to active collaborator—has three dimensions.

From Hallucination to Reasoning

Early AI models were famously prone to “hallucinations,” or confidently stated falsehoods. That’s changing. Newer models incorporate self-verification: after generating output, they check it for factual and logical consistency, catching many errors before presenting results.

This isn’t perfect accuracy, but it’s a qualitative shift. AI is beginning to demonstrate something like reasoning, not by converting language to logical symbols but by finding coherent pathways through high-dimensional representations of meaning.

From Data-Fed to Self-Learning

The era of scaling laws, where bigger models trained on more data consistently improved, is ending. We’re approaching the limits of available human-generated data.

The next generation of AI is expected to generate much of its own training data through self-play and self-verification. AlphaZero demonstrated this in games, learning superhuman Go without studying any human games. Similar approaches are now being applied to mathematics, science, and language.

From Tool to Agent

The buzziest word in AI today is “agent,” meaning a system that doesn’t just respond to prompts but pursues goals independently. An agent can understand a task, break it into steps, execute those steps, and adapt when things go wrong.

This means AI that books your travel without asking about every detail. AI that negotiates with other AI systems. AI that takes responsibility for outcomes, not just outputs.

For humans, this changes the relationship. You shift from executor to director, from doing the work to choosing which work gets done. That’s liberating—and unsettling. It raises questions about control, accountability, and the boundaries of delegation.

What This Means

- Expect more capable AI. Reasoning, self-learning, and agency will make AI useful in domains where it currently struggles.

- Prepare for oversight challenges. Agentic AI requires new frameworks for monitoring, control, and accountability.

- Focus on direction, not execution. Your comparative advantage shifts to deciding what matters, not how to do it.

Conclusion

Let’s step back and survey the landscape we’ve covered.

Artificial intelligence is changing the world in ways both visible and invisible. It’s in the classroom, the clinic, the boardroom, the farm, and the news feed. It’s creating productivity gains that boost corporate profits and efficiency gains that eliminate jobs. It’s enabling breakthroughs in drug discovery and generating disinformation that erodes trust. It’s offering tools that could empower the world’s poorest and infrastructure that could deepen the world’s divides.

The common thread across all these domains is that AI is not destiny. It’s a set of capabilities whose impacts depend on choices—choices made by technologists, policymakers, business leaders, and citizens.

The evidence from history is clear: general-purpose technologies like electricity and computing transformed society, but they did so through decades of institutional adaptation, not overnight revolution. The same will be true for AI. We have time to shape its trajectory, provided we use that time wisely.

That means investing in human capital alongside technical infrastructure. It means designing AI systems that augment human capabilities rather than just replacing labor. It means building metrics and methods to evaluate AI innovations before deploying them at scale. It means ensuring that access to AI’s benefits doesn’t replicate the patterns of the “Great Divergence” that left so much of the world behind during the Industrial Revolution.

And it means recognizing that the most important questions about AI aren’t technical. They’re human. What kind of work do we value? What kind of education prepares people for flourishing lives? What kind of healthcare system do we want? What kind of information environment sustains democracy? What kind of global order shares prosperity rather than concentrating it?

AI doesn’t answer these questions. It forces us to answer them more clearly.

The technology will keep advancing. Models will get smarter. Agents will get more capable. Integration will get deeper. But whether those advances translate into human progress depends on us—on our wisdom, our values, and our willingness to act.

The age of AI is here. The question isn’t whether it will change the world. It’s whether we’ll change it for the better.
